
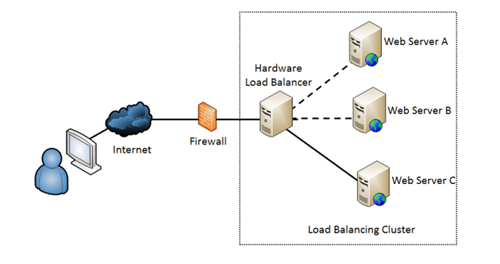

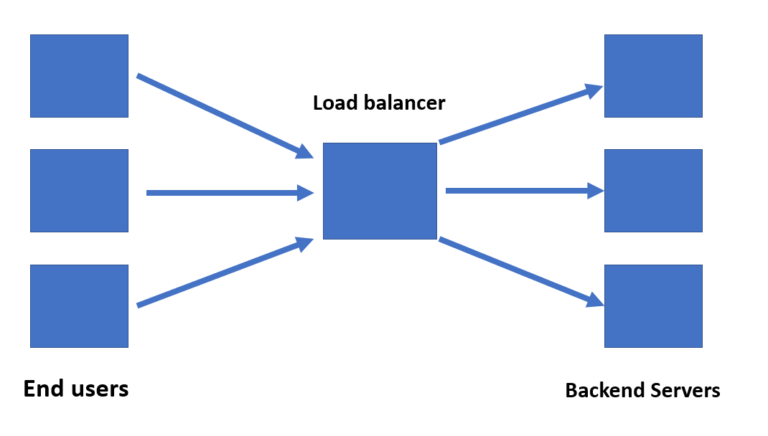

Listening rules can be configured to distribute heavy traffic among ECS instances that are attached as backend servers to SLB instances. Server Load Balancer uses session persistence to forward all requests from the same client to the same backend ECS instance, which improves access efficiency. A load balancer acts as a master and sits in front of the servers. It routes incoming requests among the servers that are able to process them, maximizing speed and capacity utilization. It also makes sure that no server is overworked, which could hamper performance. If any single server goes down, the load balancer automatically routes traffic to the remaining healthy servers.
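Session persistence and failover can be sketched together. The Python below is a minimal illustration and not how SLB is actually implemented. It assumes persistence is keyed on the client IP address; real products may use cookies instead. A hash of that key picks a backend. If that backend is marked down, the sketch probes forward to the next healthy one. The class name `StickyBalancer` and the backend names are hypothetical.

```python
import hashlib


class StickyBalancer:
    """Hypothetical source-IP session persistence with simple failover."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)  # starts with every backend healthy

    def mark_down(self, backend):
        # A failed health check removes the backend from rotation.
        self.healthy.discard(backend)

    def route(self, client_ip):
        if not self.healthy:
            raise RuntimeError("no healthy backends")
        # Use a stable hash: Python's built-in hash() of str varies per process.
        digest = hashlib.sha256(client_ip.encode()).digest()
        start = int.from_bytes(digest[:8], "big") % len(self.backends)
        # Probe from the hashed slot onward until a healthy backend is found,
        # so a client sticks to one server until that server goes down.
        for offset in range(len(self.backends)):
            candidate = self.backends[(start + offset) % len(self.backends)]
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends")  # unreachable: healthy is non-empty


# Usage with hypothetical backend names:
lb = StickyBalancer(["ecs-1", "ecs-2", "ecs-3"])
first = lb.route("203.0.113.7")
assert lb.route("203.0.113.7") == first  # same client, same backend
lb.mark_down(first)
assert lb.route("203.0.113.7") != first  # traffic moves to a healthy server
```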

A compromise must therefore be found that best meets application-specific requirements. If you're focused on uptime, load balancing between two or more similar nodes that independently handle the traffic to your website allows either one to fail without taking your website down. Load balancing also increases the reliability of your web application or website and lets you build them with redundancy in mind. If one of your servers fails, the traffic is redistributed to your other nodes without interruption of service. There are two kinds of load balancer software: commercial and open source.

What Are Load Balancing Algorithms?

If the tasks are independent of one another, their respective execution times are known, and the tasks can be subdivided, there is a simple and optimal algorithm. Divide the tasks so that each processor receives the same amount of computation; all that remains to be done afterwards is to group the results together. Using a prefix sum algorithm, this division can be calculated in logarithmic time with respect to the number of processors. For algorithms that run over the very long term (servers, cloud...), the computer architecture evolves over time. However, it is preferable not to have to design a new algorithm every time. Hash: distributes requests using a key you specify, such as the client IP address or the request URL, using a hash algorithm.
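Under the assumptions stated above (known execution times and arbitrarily divisible tasks), the equal-share division can be sketched with prefix sums. The hypothetical `divide_work` function computes the prefix sums sequentially with `itertools.accumulate`, which takes linear time. The logarithmic bound in the text applies when the prefix sum is computed as a parallel scan across processors. `Fraction` keeps the split points exact.

```python
from fractions import Fraction
from itertools import accumulate


def divide_work(task_times, num_processors):
    """Split divisible tasks so every processor gets an equal share of work.

    Returns one list per processor of (task_index, amount_of_work) pieces.
    """
    prefix = [0] + list(accumulate(task_times))  # prefix[i] = work before task i
    share = Fraction(prefix[-1]) / num_processors  # equal work per processor
    assignment = [[] for _ in range(num_processors)]
    p = 0  # processor currently being filled
    for i in range(len(task_times)):
        start, end = Fraction(prefix[i]), Fraction(prefix[i + 1])
        # A task may straddle one or more share boundaries; cut it there.
        while start < end:
            boundary = (p + 1) * share
            piece_end = min(end, boundary)
            assignment[p].append((i, piece_end - start))
            start = piece_end
            if start == boundary and p < num_processors - 1:
                p += 1  # this processor is full; move to the next one
    return assignment


shares = divide_work([3, 1, 2], 2)
```

Here processor 0 receives all three units of task 0. Processor 1 receives task 1 (one unit) plus task 2 (two units), so each processor does three units of work.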

Adding new servers during heavy traffic improves application performance, and the new resources are automatically added to the load balancer. To configure NGINX as a load balancer in the HTTP context, you must specify a group of backend servers with an upstream block. NGINX is popular web server software that is often deployed to improve a server's resource availability and performance. In a load-balancing setup, NGINX usually acts as the single entry point to a distributed web application running on several separate servers. It distributes incoming requests or network load efficiently among multiple servers to improve performance.

What Are The Benefits Of Server Load Balancing?

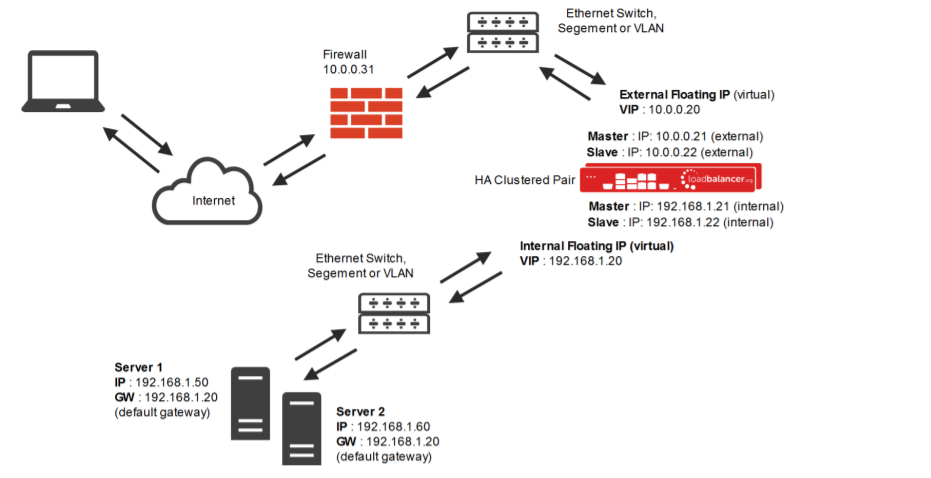

A software load balancer can be installed in your data centers, and you can use virtualization to create multiple digital or virtual load balancers that you manage centrally. Weighted least connection algorithms assume that some servers can handle more active connections than others. You can therefore assign different weights or capacities to each server. The load balancer then sends each new client request to the server with the fewest connections relative to its capacity. HTTP server load balancing is a simple HTTP request/response architecture for HTTP traffic.
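The following is a minimal sketch of weighted least connections. It assumes the operator sets an integer weight per server and the balancer tracks open connections. The `Backend` class and the function names are hypothetical. A real balancer would also decrement `active` when a connection closes, which is omitted here.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass
class Backend:
    name: str
    weight: int      # relative capacity assigned by the operator (> 0)
    active: int = 0  # connections currently open on this backend


def pick_weighted_least_connections(backends):
    """Return the backend with the fewest active connections per unit of weight.

    Ties go to the backend that appears first in the list.
    """
    return min(backends, key=lambda b: Fraction(b.active, b.weight))


def open_connection(backends):
    chosen = pick_weighted_least_connections(backends)
    chosen.active += 1  # the new client request now counts against this backend
    return chosen


# Starting from an idle pool, four new connections split 1:3, matching the weights.
pool = [Backend("small", weight=1), Backend("large", weight=3)]
picks = [open_connection(pool).name for _ in range(4)]
# picks == ["small", "large", "large", "large"]
```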

The web front end contains the graphical user interface of a website or application. Using load balancing requires additional configuration to maintain a persistent connection between the client and the server. You can also reconfigure the load balancer if the set of servers in the downstream cluster changes. If traffic is being sent to two or more servers and one server fails, the load balancer can send that traffic to the remaining servers.

Clustering provides redundancy and boosts capacity and availability. Servers in a cluster are aware of each other and work together toward a common objective. With load balancing, by contrast, the servers are not aware of each other. Instead, they react to the instructions they receive from the balancer. Software load balancers are usually easier to deploy than hardware versions.

SLB has several components that ensure the seamless operation of servers. Consider a site that suddenly becomes extremely popular, with everyone clicking on it to browse or make purchases. The server should be able to handle the surge in incoming traffic and respond to every request without noticeable performance degradation. The efficiency of this strategy decreases with the maximum size of the tasks.

How Load Balancing Works

Load balancing can also operate at the host level, distributing traffic among the network adapters connected to a switch or to a port group on a virtual switch. To do their work, load balancers use algorithms, or mathematical formulas, to decide which server receives each request. Algorithms vary and take into account whether traffic is being routed at the network layer or the application layer. Software load balancers are available either as installable solutions that require configuration and management or as a cloud service, Load Balancer as a Service (LBaaS). Choosing the latter spares you the routine maintenance, management, and upgrading of locally installed servers; the cloud provider handles these tasks.

The number of load balancers is scaled to match peak traffic, so this setup can handle large amounts of traffic. Load balancing strategies essentially act as the director on a big-budget movie set. Dynamic load balancing algorithms check the current state of the servers before distributing traffic.
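Least response time is one example of a dynamic algorithm. The text does not name a specific one, so treat this as an illustration only. The hypothetical `LeastResponseTime` class keeps an exponentially weighted moving average of observed latency for each server. It sends the next request to whichever server is currently fastest, and it tries servers that have not been measured yet first. The smoothing factor `alpha=0.3` is an arbitrary assumption.

```python
class LeastResponseTime:
    """Hypothetical dynamic balancer driven by recently observed latency."""

    def __init__(self, backends, alpha=0.3):
        self.alpha = alpha  # weight given to the newest latency sample
        self.avg_ms = {b: None for b in backends}  # None = not measured yet

    def pick(self):
        # Unmeasured backends sort first (False < True), then lowest average wins.
        return min(
            self.avg_ms,
            key=lambda b: (self.avg_ms[b] is not None, self.avg_ms[b] or 0.0),
        )

    def record(self, backend, latency_ms):
        old = self.avg_ms[backend]
        if old is None:
            self.avg_ms[backend] = latency_ms
        else:
            self.avg_ms[backend] = self.alpha * latency_ms + (1 - self.alpha) * old


lb = LeastResponseTime(["a", "b"])
lb.record("a", 120.0)
lb.record("b", 40.0)
# lb.pick() is "b": it currently answers faster.
lb.record("b", 400.0)  # b slows down; its average rises to about 148 ms
# lb.pick() is now "a", because 120 ms < about 148 ms.
```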

Benefits Of Load Balancing

Depending on the hardware structures seen above, there are two main categories of load balancing algorithms. In the first, a central master assigns tasks and workers execute them. In the second, control is distributed among the different nodes. The load balancing algorithm is then executed on each of them, and responsibility for assigning tasks (as well as reassigning and splitting them as appropriate) is shared. Upgrading from a single server to a dual-server configuration only allows for so much growth. Such a split is useful when your backend database receives a large number of requests and needs its own resources to handle them.

A slow, faulty network can affect important business services and lead to a poor end-user experience. For example, Terminix, a global pest control brand, uses Gateway Load Balancer to handle 300% more throughput. Second Spectrum, a company that provides artificial intelligence-driven tracking technology for sports broadcasts, uses AWS Load Balancer Controller to reduce hosting costs by 90%. Code.org, a nonprofit dedicated to expanding access to computer science in schools, uses Application Load Balancer to handle a 400% spike in traffic efficiently during online coding events.

The full load balancing capabilities in NGINX Plus let you build a highly optimized application delivery network. Hardware load balancers include proprietary firmware that requires maintenance and updates as new versions and security patches are released. Because they are hardware-based, these load balancers are less flexible and scalable, so there is a tendency to over-provision them. To implement application load balancing, developers code "listeners" into the application that react to specific events, such as user requests. Listeners route requests to different targets based on the content of each request (e.g., general requests to view the application, requests to load specific parts of the application, etc.). As pressure increases on a website or business application, a single server eventually cannot support the full workload.
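Content-based routing by listeners can be sketched as an ordered list of rules. This example routes on the URL path prefix only. The first matching rule wins, and everything else goes to a default target group. The rule table, the target group names, and `route_request` are hypothetical. Real application load balancers can also match on host names, headers, or query strings.

```python
from urllib.parse import urlsplit

# Hypothetical rules: (path prefix, target group). Checked in order; first match wins.
RULES = [
    ("/api/", "api-servers"),
    ("/static/", "static-servers"),
    ("/images/", "static-servers"),
]
DEFAULT_TARGET = "web-servers"  # general requests to view the application


def route_request(url, rules=RULES, default=DEFAULT_TARGET):
    """Pick a target group from the request URL's path."""
    path = urlsplit(url).path or "/"
    for prefix, target in rules:
        if path.startswith(prefix):
            return target
    return default


route_request("https://example.com/api/orders?id=7")  # "api-servers"
route_request("https://example.com/")                  # "web-servers"
```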

Hardware load balancers consist of physical hardware, such as an appliance. They direct traffic to servers based on criteria such as the number of current connections to a server, processor utilization, and server performance. When balancing network traffic with DNS, we have no control over the balancing algorithm. DNS usually rotates through the servers listed for a given record in round-robin fashion. A distinctive characteristic of DNS-based load balancing is the very limited control over DNS caching.
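DNS round robin can be sketched as a name server that returns every address for a name but rotates their order on each query. Clients that try the first address then spread across the servers. The class below is a hypothetical toy, not a DNS server, and the addresses come from a documentation range. It also reflects the limits described above. It knows nothing about server load or health. It does not model resolver caching, which in practice means many clients may reuse one cached answer until its TTL expires.

```python
from collections import deque


class RoundRobinZone:
    """Hypothetical answer source for one name with several A records."""

    def __init__(self, addresses):
        self._records = deque(addresses)

    def answer(self):
        reply = list(self._records)  # every address is returned each time
        self._records.rotate(-1)     # the next query starts with the following address
        return reply


zone = RoundRobinZone(["192.0.2.10", "192.0.2.11", "192.0.2.12"])
first_choices = [zone.answer()[0] for _ in range(4)]
# first_choices == ["192.0.2.10", "192.0.2.11", "192.0.2.12", "192.0.2.10"]
```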

NGINX provides load-balancing options used by high-traffic websites such as Dropbox, Netflix, and Zynga. More than 350 million websites worldwide rely on NGINX Plus and NGINX Open Source to deliver their content quickly, reliably, and securely. For more information about load balancing, see NGINX Load Balancing in the NGINX Plus Admin Guide.
